9 million hits/day with 120 megs RAM

Here’s a quick summary if you haven’t time to read the whole thing:

Solaris 5.11 (virtual: Joyent SmartMachine)

PHP 5.3.6 with PHP-FPM: 4 instances running, 10meg APC cache

nginx 0.8.53

Pax 1.0 (my silly self-coded website software… and yes, oops there’s already software with that name)

120 megs of RAM used

Load tested using blitz.io: 9 million+ daily hit capability

The point: I’m not doing anything exotic. I’m doing this as a hobby. This type of performance should be the rule, not the exception, for small websites. Many sites need some improvement to get to that point.

History

John Gruber’s Daring Fireball has linked to my site only twice. In 2007, I wrote a piece about the top speed of Wozniak’s Prius. In 2009, I wrote about the sad state of statistical analysis in tech journalism.

He even liked my site’s design! Geek excitement! Sorry. Anyhow…

While Mr. Gruber’s site does tend to crash the sites he links to, my server was thankfully spared the full onslaught of the Daring Fireball audience: the topics I addressed were minor, transient little additions to the dialog between Mr. Gruber and his readers. So, I survived those bursts of traffic. But early this year, I got to thinking: what if my muse humored me and I actually produced something popular? Could my server get the required number of pages onto people’s screens without melting or exploding?

So, in January, I began to refocus my coding efforts on the software powering this website.

Thank You, Shopify

My first goal was to get my PHP execution time down into the realm of Daring Fireball’s. If you pull up the markup on DF’s front page, you’ll notice a commented bit about how long it took to produce:

<!-- 0.0003 seconds -->

After checking this for about 9 months, I can tell you this almost always reads that number: 300 microseconds. This is about one third the time a camera flash illuminates. That’s, well, pretty quick. When I started, my software was taking about 0.25 seconds (250 000 microseconds) to produce the front page of my website. I needed to improve performance by over 800x.

I had already written a nice little PHP class to cache using either APC or memcached, but I had been stymied by how to expire things correctly. Since I do this as a hobby and am not steeped in the best practices of caching, Tobias Lütke’s article The Secret to Memcached hit me like a FREAKIN’ THUNDERBOLT:

At the beginning of each request we load a shop object which we pick depending on the incoming host name. We use the fact that we always load this shop model anyways and add versioning to it. This version column is incremented every time we want to sweep all caches.

AHA. And it works beautifully. Whenever anything in the DB is updated, I update the cache by incrementing that version number; because it is incorporated into all cache ids, all cache ids change. Expired cache items are never explicitly marked as such, they are simply no longer accessed and rotated out when the cache fills up.

Of course, in retrospect, it makes sense to let the cache itself manage rotating out expired items, but it took me a while to realize that. And of course, you don’t understand something until you think it’s obvious. Anyhow, requests that come in while the version number is being updated still load the stale version. Once the update lands, every request produces a new cache id, because the incremented version number is hashed in.
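Here is a minimal sketch of that version-stamping idea, written in JavaScript rather than PHP so it can be run on its own. The class and method names (`VersionedCache`, `sweep`) are hypothetical, a plain `Map` stands in for APC or memcached, and the version is joined into the key as a string instead of being hashed in. This is not the code behind Pax or Shopify.

```javascript
// Hypothetical sketch: every cache key includes the current version number,
// so incrementing the version invalidates all entries at once.
class VersionedCache {
  constructor() {
    this.store = new Map(); // stand-in for APC or memcached
    this.version = 1;       // assumed to be stored alongside the site's data
  }

  // Build the real key from the version plus the caller's name.
  key(name) {
    return `v${this.version}:${name}`;
  }

  get(name) {
    return this.store.get(this.key(name));
  }

  set(name, value) {
    this.store.set(this.key(name), value);
  }

  // Call after any database write. Old entries are not deleted here; they
  // are simply never looked up again. A real cache would evict them as it
  // fills up, which this Map does not do.
  sweep() {
    this.version += 1;
  }
}

const cache = new VersionedCache();
cache.set('front_page', '<html>old</html>');
const before = cache.get('front_page'); // hit: same version
cache.sweep();                          // e.g. a new comment was saved
const after = cache.get('front_page');  // miss: the key changed
console.log(before, after);
```

The appeal of the design is that nothing ever has to track which entries depend on which database rows: one counter covers everything, at the cost of regenerating pages that did not actually change.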

My blog is quite light on input… and traffic, so worrying about cache stampedes is a bit much right now. After a few weeks running, PHP’s APC gives a nice hit/miss ratio:

This caching (I’m using APC right now) got my page generation times down to about 170 microseconds for most pages, and 400 microseconds for the front page, which takes some time to set a cookie or two. The reason for those cookies follows.

Faking Dynamic Features Using Inline Caching

The title of this section could be yet another preposterous acronym: FDFUIC. What it means is, caching can be aggressive but still deliver dynamic features to your visitor.

This was the challenge: I wanted to give each user a personal update on what was added to my website since they last visited. But I wanted to cache only one version of the front page… and serve it to everyone. These two goals seem mutually exclusive. They aren’t. Here’s the solution (scalability notes after the implementation):

1. PHP: set a cookie recording the time of the user’s visit. Set it to expire in X days.

2. PHP: when assembling the front page, prepend it with all the comments (hidden from view) left on the site in the past X days. My X value for this site is 60 days.

3. PHP: add date information in the ISO8601 format to each hidden comment:

<time datetime="2011-08-27T19:15:38Z" pubdate style="display:none">

4. JavaScript: load the cookie saved in (1).

5. JS: inspect all comment nodes. If the <time> of a node is after the cookie time, then change the style to make that comment visible.

6. JS: discard the comments with <time>s before the cookie time.

7. JS: check the other items on the front page (continue through the DOM and check each <article> node). Mark nodes with a red dot if their <time>s are after the cookie time.
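The client-side steps above can be sketched roughly as follows. This is an illustration under assumptions, not the function linked below: the cookie name `last_visit`, the choice to store an ISO8601 string in it, and the helper names `readCookie` and `splitByLastVisit` are all hypothetical. Plain objects stand in for the hidden comment nodes so the sketch runs outside a browser.

```javascript
// Read one value out of a document.cookie-style string ("a=1; b=2").
function readCookie(cookieString, name) {
  for (const part of cookieString.split(';')) {
    const [key, ...rest] = part.trim().split('=');
    if (key === name) return decodeURIComponent(rest.join('='));
  }
  return null;
}

// Split items into those posted after the last visit and the rest.
// Each item carries the ISO8601 string from its <time datetime="..."> attribute.
function splitByLastVisit(items, lastVisitMs) {
  const fresh = [];
  const stale = [];
  for (const item of items) {
    const postedMs = Date.parse(item.datetime); // ISO8601 -> milliseconds
    (postedMs > lastVisitMs ? fresh : stale).push(item);
  }
  return { fresh, stale };
}

// Example data standing in for the hidden comments on the cached page.
const comments = [
  { id: 'c1', datetime: '2011-08-20T10:00:00Z' },
  { id: 'c2', datetime: '2011-08-27T19:15:38Z' },
];
const cookie = 'theme=dark; last_visit=2011-08-25T00%3A00%3A00Z';
const lastVisit = Date.parse(readCookie(cookie, 'last_visit'));
const { fresh, stale } = splitByLastVisit(comments, lastVisit);
console.log('show:', fresh.map(c => c.id), 'discard:', stale.map(c => c.id));
```

In a browser, the items would come from something like `document.querySelectorAll('time[datetime]')`, the `fresh` nodes would have their `display:none` style cleared, and the `stale` ones would be removed from the DOM.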

Interesting tidbit here. I actually used a modified version of John Resig’s “Pretty Date” code snippet, one he put together to live update time on nodes in a twitter clone he was thinking about. The final function I ended up with is available here.

An image follows to explain how it all comes together:

So, if you want to show someone what is new since they have been gone, your first instinct may be to do it dynamically. My point here: for small sites, that’s not always the best solution. Here, we use the fact that the site is small to our advantage: we can easily prepend 60 days’ worth of comments, but we don’t have enough spare processing power or RAM to dynamically assemble the front page for every user AND maintain robust performance.

Scalability note: if you have a higher traffic website, perhaps you should only set the expiration time to 5 days. Then you won’t be prepending your front page with a lot of unnecessary data/comments (from the other 55 days). If the user visits less frequently than every 5 days, well, then they have a lot to catch up on anyway, and you might as well not overload them with new stuff.

Trial Run

This past May, Hacker News picked up a long piece I wrote about my efforts to improve infinite scroll. I was thrilled that it was pretty popular. I was thrilled my server didn’t melt! However, it came close.

I checked my running processes and found that Apache’s MaxClients parameter was not at all the right fit for my little 256-megs-of-RAM server.

nginx, PHP-FPM

After a few days of research, I installed nginx and PHP-FPM. This set-up gives me much better control over processes than Apache did, with its explosion of client processes under load. PHP-FPM’s pm.max_children is set to 6, and as I write this it has 4 processes running.

nginx, of course, is a beast (in the best possible way: rock solid, low memory usage).

A little tidbit about how PHP & nginx communicate: instead of using a TCP port (with the corresponding overhead), nginx is communicating with PHP over a Unix socket. The relevant parts of the config files are as follows.

PHP-FPM:

listen = /tmp/php5-fpm.sock

nginx’s PHP location block:

fastcgi_pass unix:/tmp/php5-fpm.sock;

Fast fast fast.

Memory Use

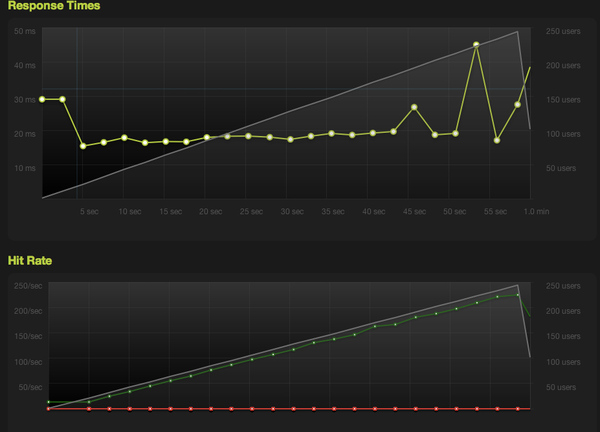

With a little prstat -Z -s size (remember, this is Solaris), my RSS is currently at 115megs. I’ve run the following rush at blitz.io:

--pattern 1-250:60 -T 4000 -r california http://tumbledry.org/

Yes, the timeout is increased. Give me a break: I can’t make miracles!

I never knew servers could be this efficient. I have a lot to learn.

Comments

Guillermo Garron +2

DF uses Movable Type to blog. Thus he has static pages with no PHP code.

Guillermo Garron

Sorry for posting again: I forgot to add: - Thank you for this great post, you have achieved almost the performance of a static site using dynamic PHP.

zob

apache/php is actually faster than nginx because apache has mod_php and it’s way, way faster than fcgi. it’s also faster if you put nginx in front of that for caching, because nginx is much faster than apache-prefork at serving simple content

finally, apache-event/mod_php is about as fast as apache-prefork/mod_php+nginx but it’s not very stable, due to php’s issues with threading.

yeah i know, reading the web’s hype doesnt always give you the right answer about that sort of stuff, since 99% of the articles are written by people that aren’t actually knowing what they’re doing ;-) (sad!)

Joshua Kehn +1

Very nice. I dealt with PHP for my own site before moving straight to static files. Cleaner, simpler, and I don’t have to worry about security. Of course, this means I don’t have comments or any other dynamic features either.

Luke

@zob every time Apache loads, even to serve an image, it has to load mod_php. This adds a very large memory overhead. Alex states he’s using FPM, baked into PHP as of 5.3.3. FPM is a vastly improved version of fcgi and allows the PHP processes to be running continually, without the necessity of loading each time.

Overall, Apache can’t hold a candle to Nginx (or indeed any of the recent wave of efficient servers). The main benefit is the near linear memory usage compared to Apache.

Philip Tellis

Pretty cool. A few questions though. How much CPU does this use? In my experience, when you increase concurrency, CPU rather than RAM becomes the bottleneck. Secondly, why did you pick ISO8601 over a unix timestamp? Wouldn’t it have been easier to compare timestamps (numeric comparison v/s string comparison)?

Also, since you’re doing the date comparisons in JavaScript, did you consider setting the cookie in JavaScript as well? All you’d need is the timestamp of the most recent comment (and not the current time), and you already have that in the page.

Dimitry

@Philip Tellis: using ISO8601 date string lets us do a lexicographical sort. It’s not as fast as numeric sort, but a lot more readable ;)

finance

Nice try. So it is really about cache efficiency then.

kowsik

Alex, thanks for the plug on http://blitz.io - drop us a note and we’ll get you a one-time +250 blogging credit so you can rush with higher concurrency! @pcapr

Ryan Voots

@Dimitry: He shouldn’t be needing to do a sort to do the comparison to determine if a comment is newer than the last time they were there. It should be possible to do it with a single < comparison if you’re using a Unix timestamp.

@Phillip: What I’d suspect is happening is that he gets the ISO-8601 directly from the database and uses that to reduce processing time on the server. Pushing it off to the clients instead.

ur

That’s what happens when you implement caching the right way, well done. Just for reference, i get the same result from a small linode serving static files using the internal nginx cache, you can be happy with your configuration :)

xelnyq +1

great informative post, im also using nginx but with fastcgi; is your cms open source by any chance?

jrm

oh and btw if you would do an article on how to implement all that youre talking about it would be golden

Ian Wright +1

Great post Alex. After seeing your results I’m going to have to try and use more caching to improve my site’s speed.

luke holder

If you want a static site, but want to keep commenting, try a third party commenting system like disqus. It’s an iframe, and will not slow down the article delivery.

bits

I think you mean milliseconds (ms), not microseconds (μs)…

Joshua Kehn

Mind running

--pattern 1-50:2,50-250:3,250-250:55 -T 5000 -r california http://tumbledry.org/

and posting the results? I’m trying to compare it against my own benchmark.

Michel Bartz +2

@Luke : If you load mod_php to serve images, that’s your fault more than Apache’s, just learn how to use it before blaming it (like a lot of people tend to do). @zob is very right in his comment, i considered migrating one of the sites i was managing to nginx+fpm, the website in question was doing 110 million hits/day, and nginx didn’t perform better than a properly configured Apache, as well as a well architected system.

I’d recommend that you look into a solution like Varnish, i use it heavily and it’s a monster, on a much higher level than Nginx will ever be. (don’t get me wrong nginx is good, just not good for PHP…).

As for keeping things dynamic, JavaScript and asynchronous loading of the dynamic parts is one solution, or if you turn to a solution like Varnish/Squid you can even give a shot at ESI.

But still, nice performances there.

Intesar Mohammed

9 million per day, basically it comes down to 104 requests/second which is just ok

Nicolas

@Intesar Mohammed

Yeah, but in real world usage you’re not likely to have a constant load. At peak you could expect much more. But the concern for performance isn’t serving a basic blog, it’s more a real full blown application (think gmail) where caching is more complex and provides fewer gains.

The solution provided here (send more content than necessary and let js perform the processing) could come with a little bandwidth overhead too (and thus increase transfer time). Counting a peak of 500req/second and 50kB per gzipped page, it is already 195Mb/s without the protocol overhead.

So these stats are good for CPU/server requirements but do not solve every possible problem.

Still very impressive and congratulations to Alex.

macem

wow nice article. btw, there’s also a pax command on IBMs AIX thats been around for ages :) http://publib.boulder.ibm.com/infocenter/aix/v6r1/index.jsp?topic=%2Fcom.ibm.aix.cmds%2Fdoc%2Faixcmds4%2Fpax.htm

dalesaurus

Excellent walk through for low memory web server setups. Another web server to check out is Cherokee, if you’re looking for something memory thrifty. http://www.cherokee-project.com/

Alexander Micek

Thanks for the thoughtful and informative comments, everyone. A few responses:

@Philip Tellis

CPU: I haven’t had any issues; I believe this could be because Joyent’s SmartMachines allow resource bursting, which includes CPU.

ISO8601: I used this date format because it makes the datetime attribute of my HTML5 time tag valid. You can read more about that here. I do have to do some minor converting with the JS to get to Unix time, but it works.

@kowsik

No, thank you for blitz.io! Once I get some more free time, I’ll be sure to contact you fine folks.

@xelnyq

I’ve had this question before — I’d like to make my CMS open source; I’ll get to that!

@jrm

Your best bet would be to take a look at my JS source, linked in the article.

@bits

Nope, I really do mean microseconds.

@Joshua Kehn

I’ll do that sometime; looks like your site is quite robust, with an extremely high capacity!